AI Changes Everything But Not How You Think

Human Cognition Is Still the Constraint

In seventh grade I went on a field trip to the General Motors assembly plant in Framingham, Massachusetts. Our social studies class had been studying the industrial revolution — the productivity gains and the displacements both. (Hat tip to Dr. Martin Sleeper for teaching us to think critically rather than just admire.)

What nobody mentioned was that the plant was already dying. A few years later Michael Dukakis would hold it up as proof of the Massachusetts Miracle — the economic revival that became the centerpiece of his 1988 presidential campaign. That particular plant had become a symbol of American industrial resurgence at the precise moment it was past saving.

Years later I read The Machine That Changed the World. Jim Womack and his colleagues had spent years studying that exact plant. It was their central example of everything wrong with the industrial model. Overstaffed. Inflexible. Producing defects at a rate that lean manufacturers in Japan had made unthinkable.

The plant closed in 1989.

I’ve been thinking about that gap ever since. Between the symbol and the reality. Between the confident presentation and what was actually happening underneath it. Between the system working perfectly and the system working.

When ChatGPT arrived in November 2022 the reaction was nearly universal. Transformative. Revolutionary. The moment everything changed.

My reaction was different. I thought it was impressive. And then I thought — all I can picture is two AIs talking to each other.

It wasn’t a breakthrough in human understanding. It was more generation, more output — more of the thing we already had too much of, produced faster and formatted better.

I had seen this movie before.

I wrote about this pattern last year using an example from my own professional past. In the 1980s CAD/CAM arrived with the same energy AI carries today. Engineers would think differently. Products would be conceived differently. The creative act of design would be fundamentally transformed.

What actually happened was more instructive. CAD/CAM accelerated the wrong layer. Drawing got faster. Decisions got slower. Dependencies exploded. And as one engineer from that era put it — once everyone used the same CAD system, designs started looking the same.

The tool got better. The thinking stayed the same. The biology didn’t move.

AI is repeating this at a scale CAD/CAM never approached. Not just in one discipline but across every form of knowledge work simultaneously. More generation. More output. More of the thing we already had too much of, produced faster and formatted better. And underneath it — the same unexamined assumptions about what knowledge is, how it moves, and what it’s for.

The legal disclosure is the perfect metaphor for what we have built.

Lawyers write it for other lawyers. It gets updated when a policy changes or someone finds a loophole — not when users fail to understand it. The person clicking “I agree” was never really the intended audience. And it isn’t genuinely negotiable — you can decline to agree, which means declining to use Chrome, or Gmail, or any of the infrastructure modern life runs on. Even regulations designed to protect users get captured by the same logic. GDPR was meant to restore meaningful consent. It produced the cookie banner — a popup that interrupts everyone thousands of times a year to obtain permission that nobody meaningfully gives. The compliance theater replaced the protection it was supposed to provide.

The disclosure performs informed consent without producing it. It feels responsible. It protects the institution. It communicates nothing to the person it was nominally written for.

AI meeting summaries work the same way. Accurate. Formatted. A confident record that the meeting happened and that things were said. The tension in the room — the idea that almost got said, the moment when someone’s face changed, the thing everyone understood but nobody wrote down — none of that survived the summarization. The left hemisphere produced a perfect record of what the right hemisphere actually understood.

We did not get more productive. We got better at generating the appearance of productivity.

Here is what I think is actually happening.

We are still running a model of knowledge work that emerged in 1913 and has never been questioned.

In that year Henry Ford’s factory in Highland Park demonstrated something the world had never seen — work decomposed into repeatable steps, optimized, measured, and scaled. Frederick Taylor had provided the intellectual framework. Ford made it undeniable. What followed was not just a manufacturing revolution. It was an invisible template for how every organization on earth would come to think about knowledge, decisions, and coordination.

There is a detail about 1913 that matters enormously. The physical systems — the assembly line, the division of labor, the measurement apparatus — were deliberately and precisely engineered. The knowledge architecture underneath them was never designed at all. Nobody decided how understanding would travel through the organization. How meaning would move from the factory floor to the boardroom. How assumptions would get surfaced and questioned. That part accumulated. It was assumed. It became organizational common sense.

There was a reason nobody noticed the gap. The hierarchy filled it. Information traveled up one chain of command and down another — and for most of the industrial era that was sufficient. The environment was stable enough, decisions were slow enough, and information was scarce enough that the org chart functioned as a knowledge system. It wasn’t designed as one. It just worked as one, well enough, for long enough that nobody thought to ask whether something better was possible.

That condition no longer holds. It hasn’t held for decades.

Slack, Teams, and email created informal channels that work around the hierarchy for day-to-day coordination. That’s genuinely useful. But they didn’t replace the hierarchy as a knowledge system. They created a parallel layer alongside it. Now you have the formal hierarchy still making decisions based on information that traveled up and down the official chain, distorted at every handoff as before. And underneath it sits a vast unstructured layer of threads and messages and email chains where the actual thinking happens, the real decisions get pre-made, and the context lives that never makes it into the formal system.

Two patchwork emergent knowledge systems. Rarely talking honestly across the divide.

The hierarchy has the authority. The informal layer has the understanding. The gap between them is where organizational disasters quietly incubate. The night before the Challenger launch, the engineers who understood the O-ring risk and the managers who approved the launch were looking at the same information. They interpreted it differently because different incentive structures were bearing on them. The knowledge system had no mechanism for surfacing unwelcome truths to people with strong reasons not to hear them. I’ve written about this in more depth elsewhere.

We inherited this whole. Not as a blueprint that could be revised. As the invisible water we swim in.

The industrial model then migrated further. Into job descriptions. Org charts. Performance metrics. Knowledge bases. Ticketing systems. And into the education system that produces the people who run all of those organizations.

Not because anyone decided to teach compliance. The reward structure did it quietly. Education is built around demonstrating that you’ve absorbed the existing explanation — finding the right answer, the one the teacher already knows, the one that gets graded. Generating better explanations is mostly irrelevant to whether you succeed. Nobody tells you not to ask questions. The system just makes asking questions mostly beside the point.

David Deutsch would call this teaching people to memorize rather than to conjecture and refute. The factory needed people who could do the former. The age we’re entering desperately needs people who can do the latter.

AI doesn’t fix this. It deepens it.

Every assumption baked into the industrial model gets more embedded, more confident, and harder to question. Bad explanations get generated at volume. Wrong questions get thoroughly answered. The organization mistakes fluency for understanding and output for insight. The factory doesn’t run faster. It runs deeper in the wrong direction.

And this is where the title of this piece means two things simultaneously.

AI changes everything in your organization’s environment. Your inbox. Your meetings. Your competitive landscape. Your industry’s economics. The transformation is real.

But it changes nothing about how human beings actually think. The biology hasn’t moved. The same brain that struggled to make meaning in 1913 is struggling to make meaning now, with the same cognitive limitations, the same capacity for motivated reasoning, the same tendency to mistake fluency for understanding. Iain McGilchrist spent two volumes demonstrating that the Western mind has been systematically suppressing its own capacity for contextual, relational, meaning-making intelligence. That suppression didn’t get a software update in November 2022.

The gap between a transformed environment and an unchanged biology is where the real problem lives.

Most organizations are running on explanations that accumulated over the last century and were never examined.

The industrial model wasn’t just a management technique. It was an explanation of what knowledge is, how it moves, and what it is for. Capture it. Codify it. Control it. That explanation made sense when information was scarce and coordination was expensive. It makes no sense now. And AI doesn’t update the explanation. It just runs it harder.

Every explanation is provisional. The best available understanding at a specific moment, always replaceable by a better one. The industrial model was a genuinely good explanation for its time. The problem isn’t that we adopted it. The problem is that we stopped looking for what comes next.

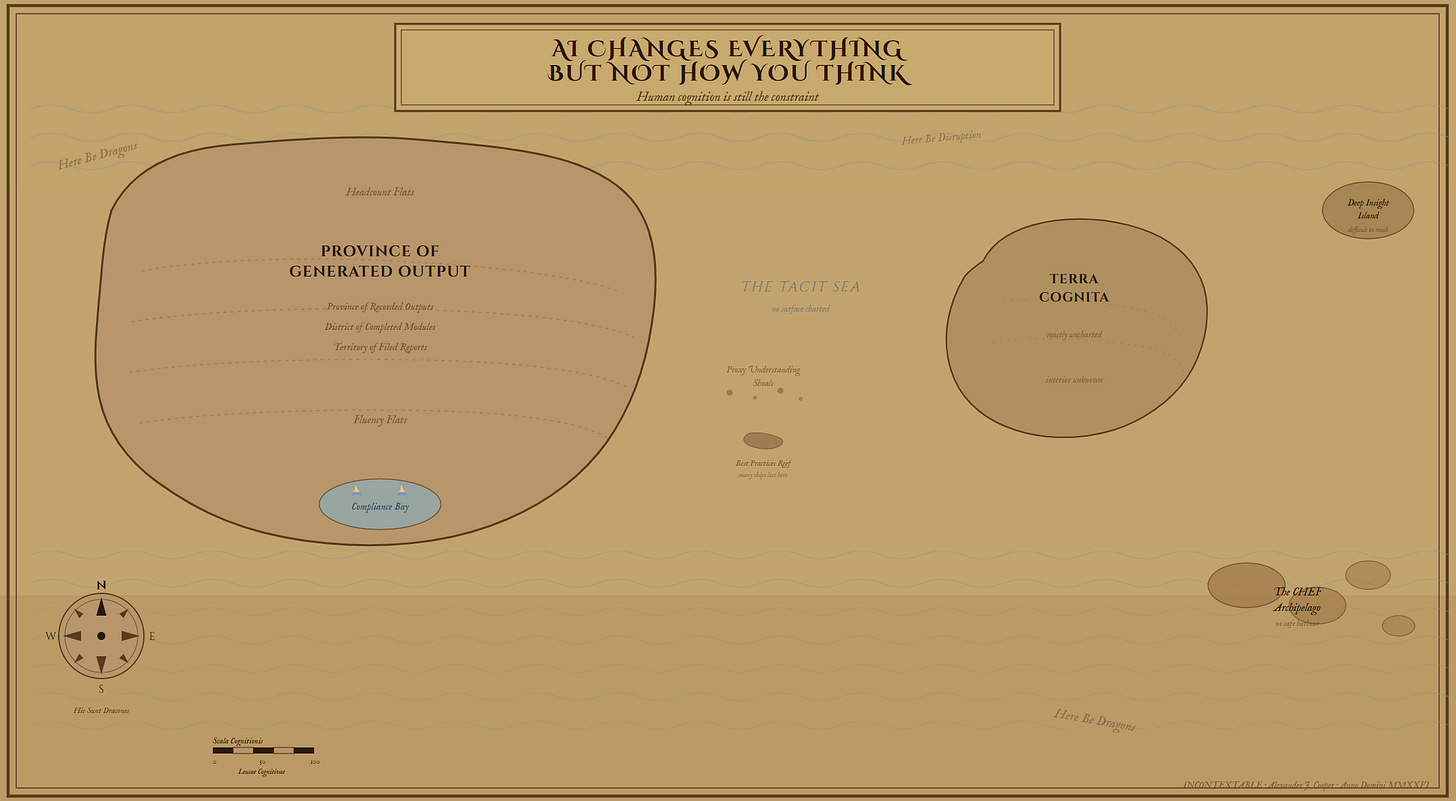

That is what Best Practices really means. We found an answer and stopped asking the question.

We Are at a Precipice

A small number of organizations will recognize this moment as the sorting event it is and ask the question the industrial model was never designed to answer: what would knowledge work look like if we were designing it today, from scratch, for the world we actually live in?

The rest will let Microsoft decide what knowledge is worth capturing. They will let Google determine what connections are worth surfacing. They will let OpenAI define what a good answer looks like. Those are not neutral technical decisions. They are the most consequential strategic choices of the next decade, being made by people whose interests are not yours, at a moment when most organizations don’t realize the choice is being made at all.

We remember the Amazons. We forget the Kmarts.

The foundational claim of the work I’m developing is simple.

Organizations treat knowledge as permanent. It never is.

Every piece of organizational knowledge is a best available explanation at a specific moment in time. It carries assumptions that decay without anyone noticing. It fractures as it travels. It hardens into policy and process and eventually into something nobody questions because nobody remembers it was ever a choice.

The Framingham plant closed because the explanation it was built on stopped being true. The people running it had every incentive to believe otherwise. The data they were looking at told them things were fine.

New organizations have always had one advantage: they get to start with modern technology rather than inherit their predecessors’ decisions. That same opportunity now exists for knowledge architecture. Most organizations are running information systems built on industrial-era assumptions. The question is whether anyone is willing to build something optimized for how knowledge actually behaves.

That is the argument. The book that develops it is coming. If you’ve been watching the gap between what the system says is happening and what you know is actually happening, you’re in the right place.